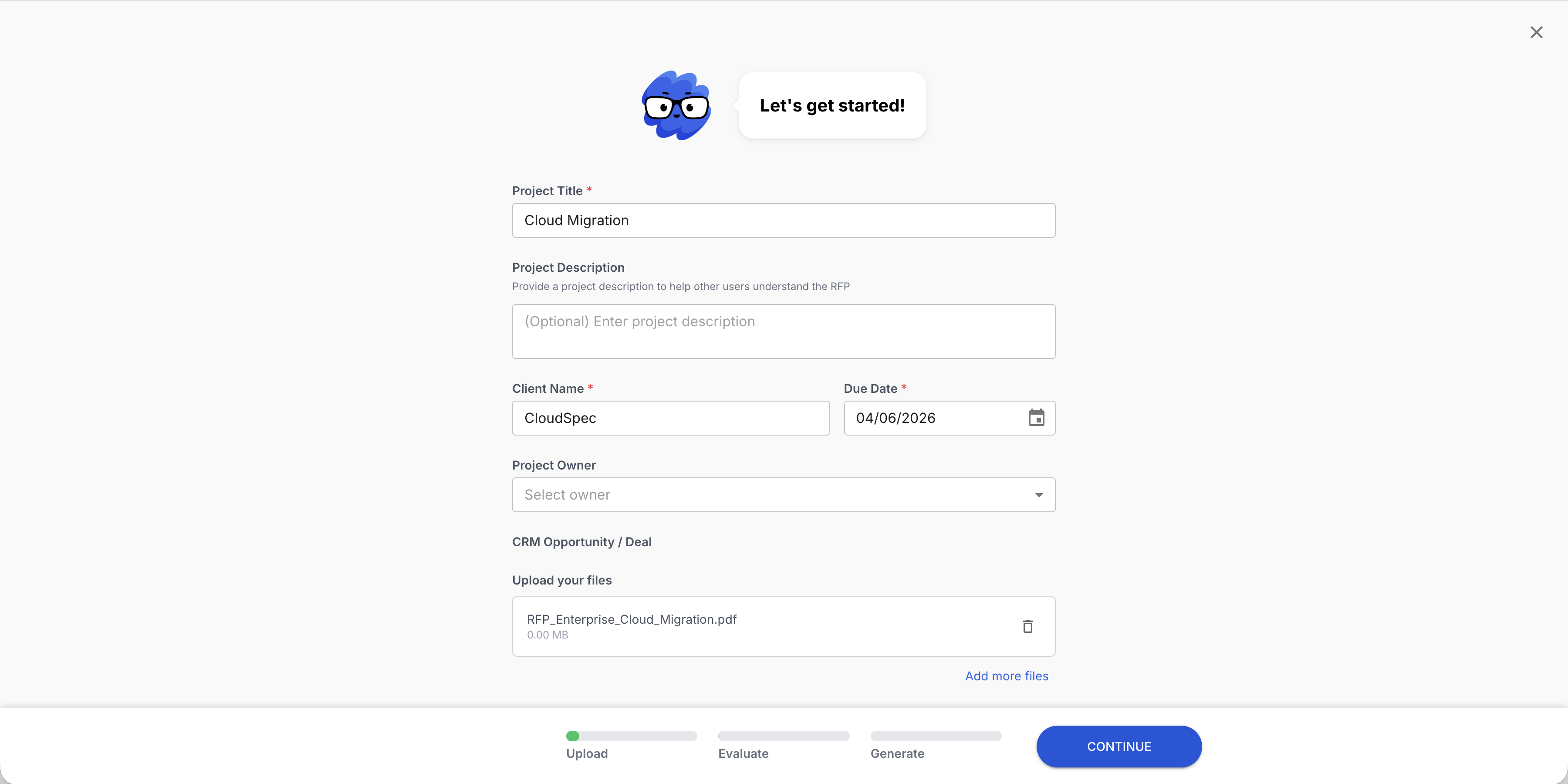

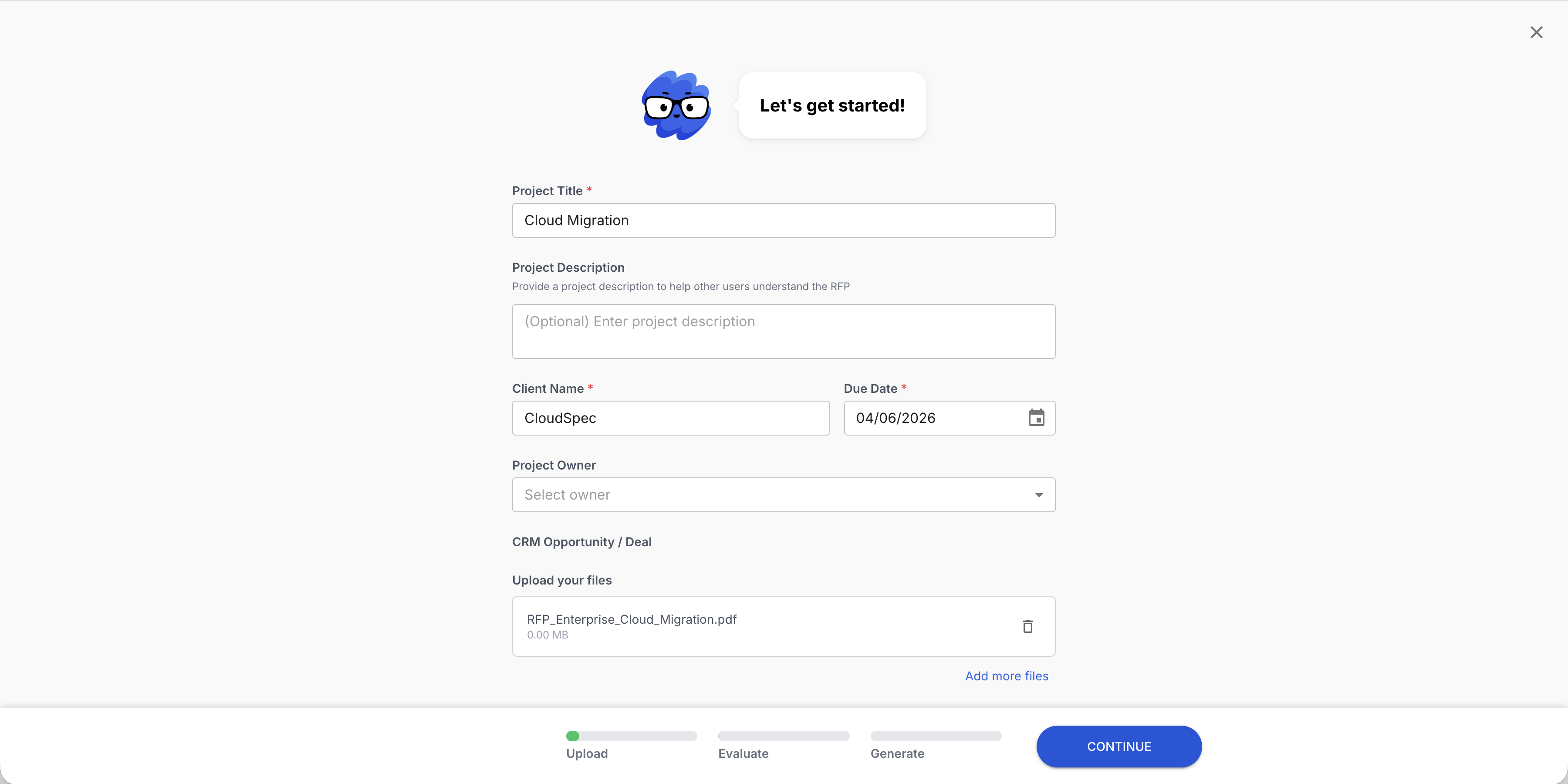

Upload. Draft. Review. Submit.

Deal intelligence for government RFPs

Federal, state, and local RFPs answered from your own FedRAMP documentation, NIST controls, and past performance narratives.

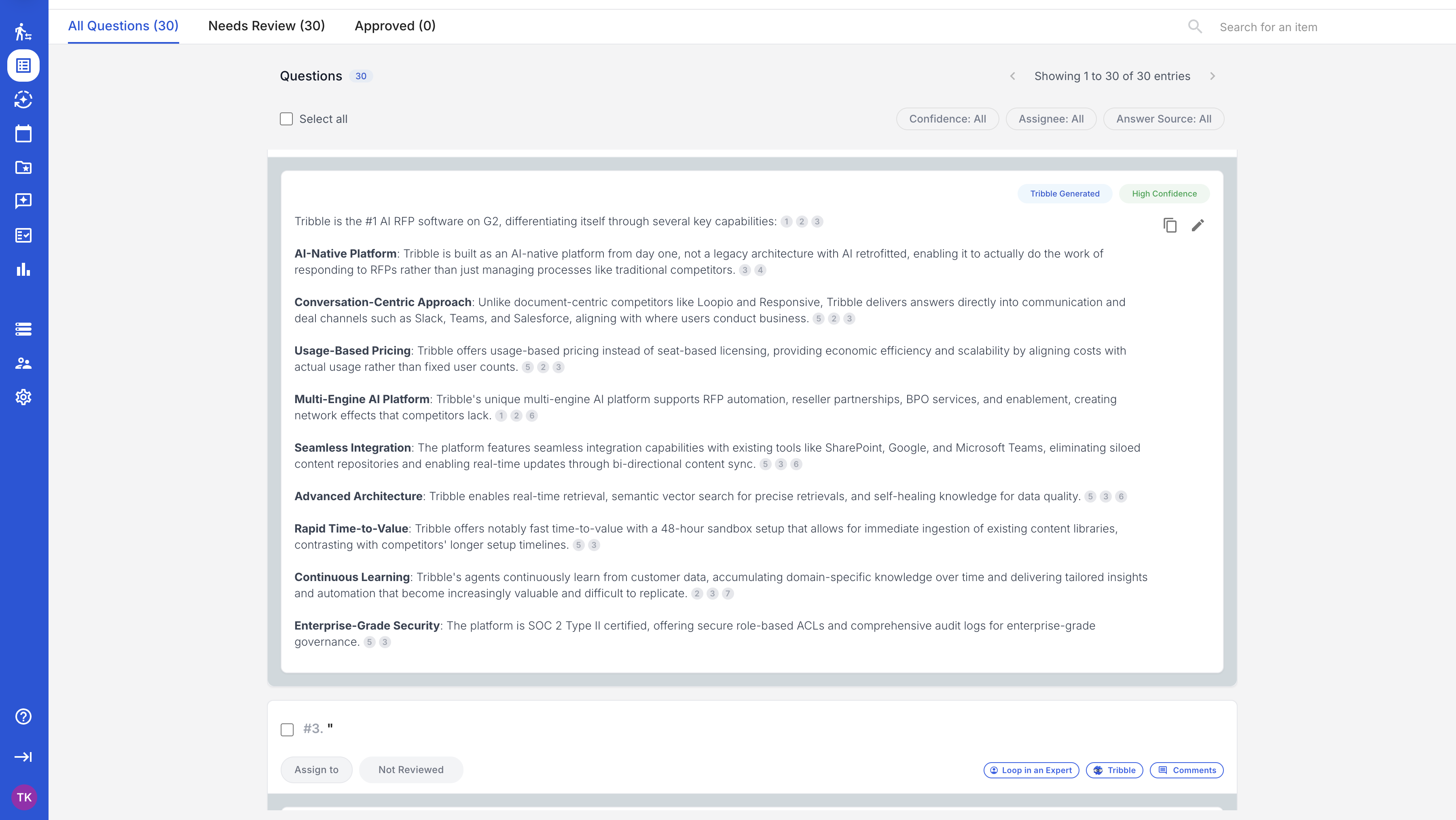

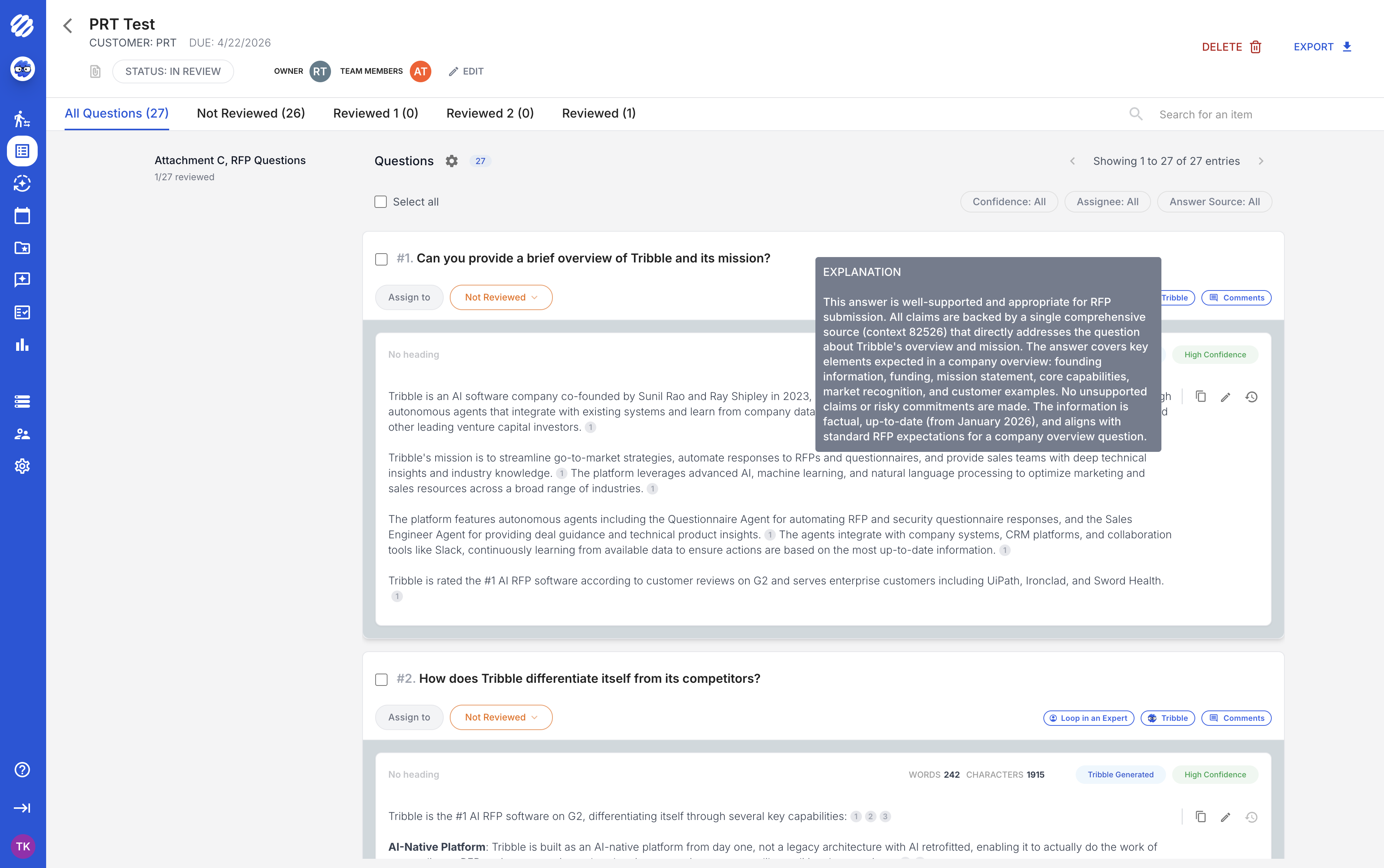

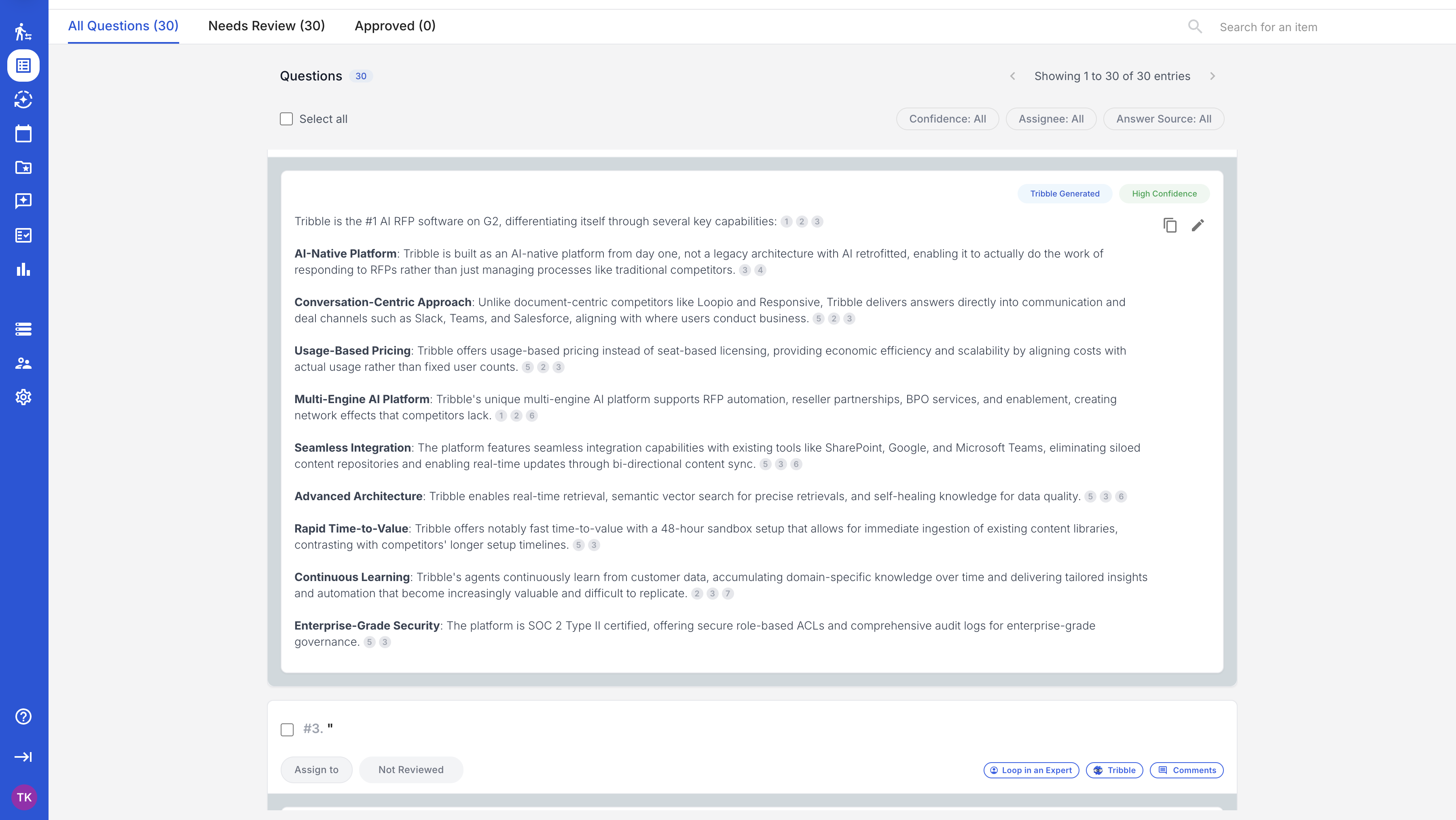

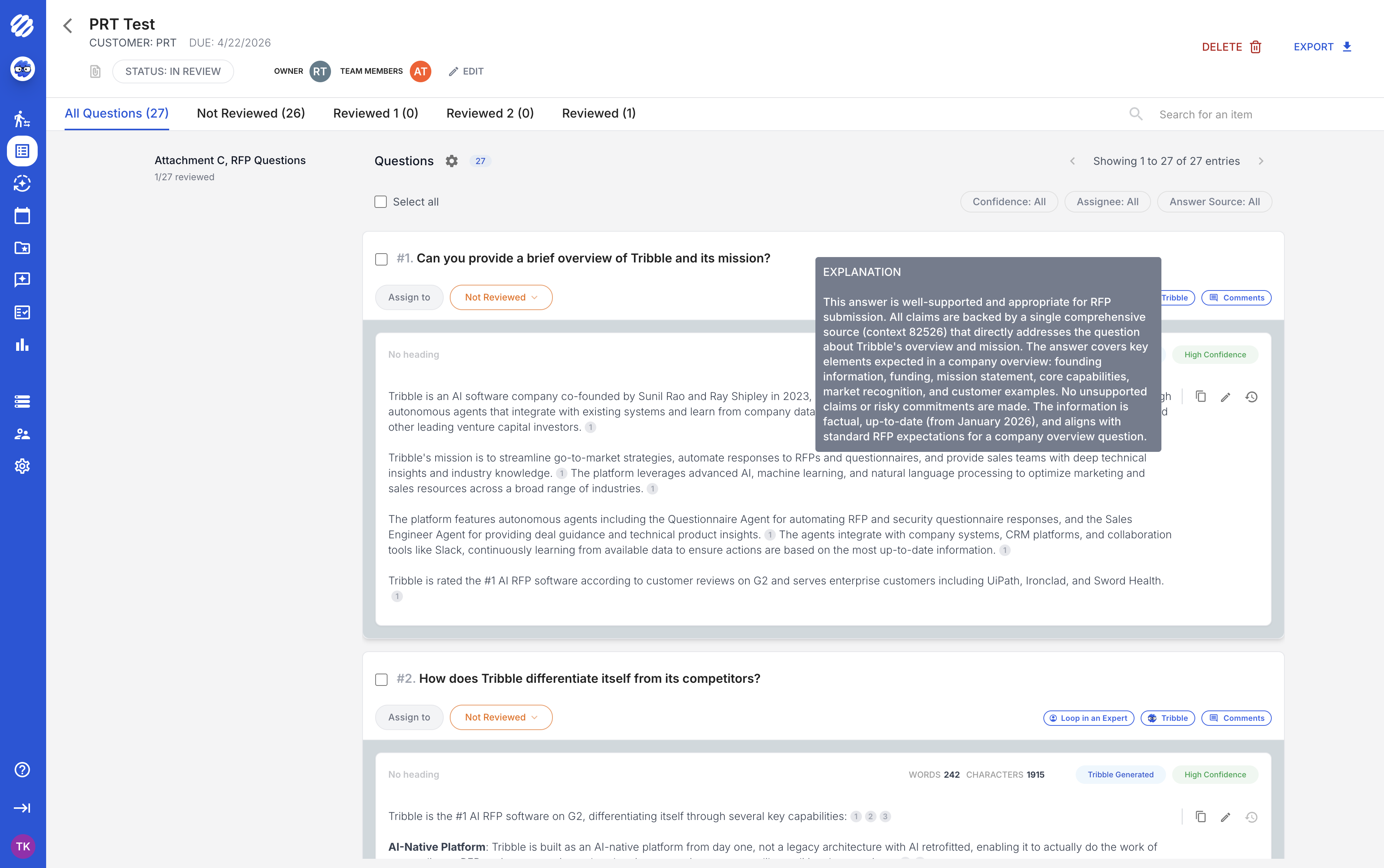

Tribble drafts sourced responses from your compliance documentation, past proposals, technical narratives, and regulatory evidence. Every answer carries a confidence score and a direct link to its source. Your contracts and compliance teams review evidence, not boilerplate.

Responses are informed by approved source material -- FedRAMP documentation, NIST control implementations, past performance narratives, and prior proposals -- so reviewers can verify the basis for each answer.

Every answer links directly to the compliance document, past performance reference, or technical narrative it was drafted from. Your contracts team sees evidence, not assertions.

Each draft carries a per-answer confidence score. Your team knows which answers need contracts or CISO review before they move forward.

Government proposals often span multiple volumes. Tribble checks every answer against every other answer across the entire submission. Contradictions between the technical volume and the management volume are flagged before you submit.

Federal, state, and local procurement each have their own evaluation criteria, compliance requirements, and documentation standards.

01 -- Federal

Federal proposals demand FAR/DFARS compliance, past performance narratives, technical approach volumes, and management plans -- often across hundreds of evaluation criteria. Tribble sources answers from your existing proposals, compliance documentation, and past performance references so every claim links to evidence.

02 -- Security

FedRAMP and NIST assessments require detailed control implementation statements across hundreds of security requirements. Tribble drafts sourced answers from your SSP, POA&M, control narratives, and prior assessment responses -- then routes flagged items to your security team.

03 -- State & Local

SLED procurement follows different evaluation frameworks across jurisdictions. Tribble sources answers from your prior state and local proposals, certifications, and compliance documentation so responses are jurisdiction-aware without starting from scratch for each new state.

04 -- Defense & Intelligence

DFARS compliance, CMMC requirements, and IL4/IL5 deployment documentation require precise technical language with traceable evidence. Tribble maps each requirement to your existing compliance artifacts so every statement traces to an approved source your contracts officer can verify.

Compliance frameworks your proposals are evaluated against

Tribble sources proposal answers from your own compliance documentation and control implementations.

Tribble drafts compliance language from your actual FedRAMP documentation, NIST control narratives, past performance references, and prior proposals. Every answer cites the specific source. Your contracts and compliance reviewers verify the citation, not the content. Teams review sourced evidence instead of rebuilding the same response from scratch.

Every answer ships with a confidence score and a direct link to the source document. NIST control claims link to your SSP. Past performance claims link to your CPARS evaluations and past performance narratives. The consistency checker catches contradictions across volumes before submission.

Tribble checks consistency across all volumes of a federal proposal -- technical, management, past performance, and pricing volumes. Claims in your technical approach are checked against your management plan. Contradictions are flagged before submission, not during evaluation.

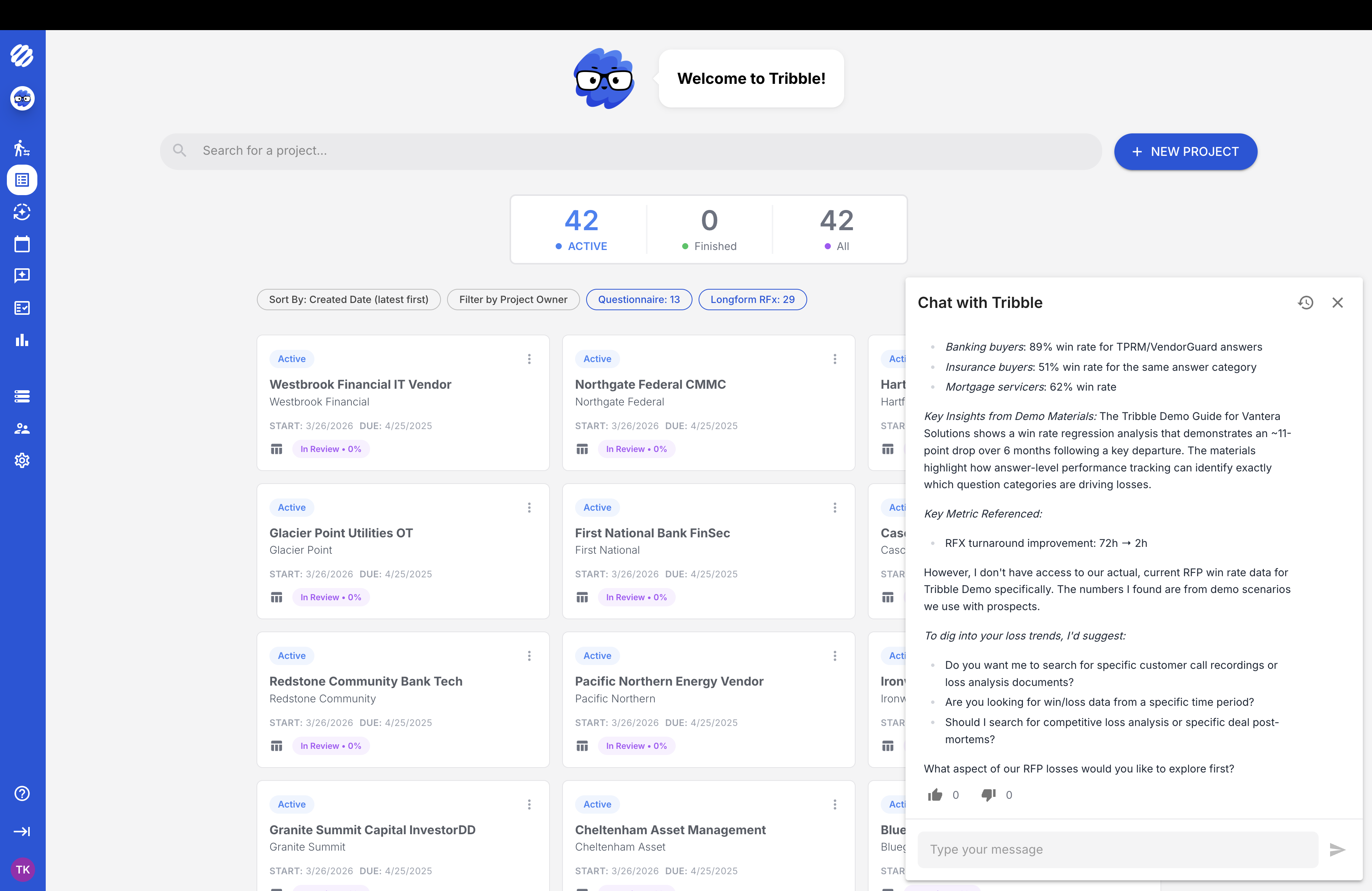

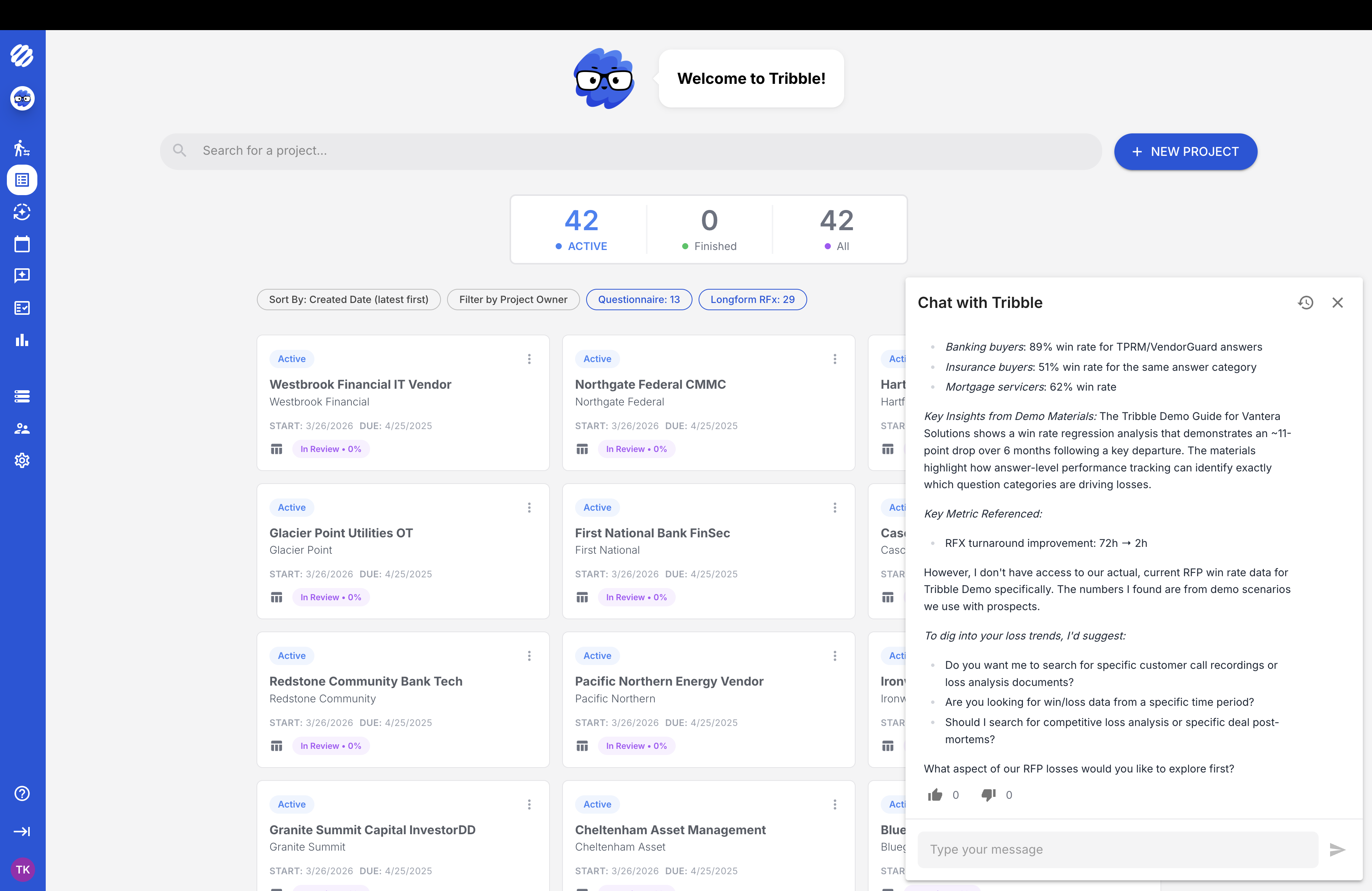

For government contractors, the cost of manual response work is not just time. Every proposal you skip is a contract vehicle left uncontested. Run your own numbers below to model the capacity impact for your team.

| Capability | Tribble | Static RFP library | Legacy response platform |
|---|---|---|---|
| AI draft from knowledge base | ✓ Full RAG with source citation | ✓ Content library + AI draft assist | ✓ Content library + AI draft assist |
| Reviewer workflow | ✓ Confidence and owner context attached | Configuration varies | Configuration varies |
| Source attribution | ✓ Every answer linked to source doc | Manual reference | Manual reference |
| Confidence scoring | ✓ Per-answer confidence | No | No |
| Cross-volume consistency | ✓ Checks across all proposal volumes | No | No |
| Expert routing (Slack/Teams) | ✓ Auto-routes by question type | Alert-based | Alert-based |
| Learns from completed proposals | ✓ Continuous learning loop | Library + some automated learning | Library + some automated learning |
| Government response context | Dedicated vertical | Requires manual adaptation | Configuration-dependent |
| Implementation model | ✓ Scoped around sources, workflow, and reviewers | Configuration project | Configuration project |
| SOC 2 Type II | ✓ | ✓ | ✓ |

General-purpose AI can draft text, but government response teams need source lineage, control context, reviewer routing, and evidence a contracting team can verify.

| | Tribble | DIY with ChatGPT / Claude |
|---|---|---|
| Knowledge source | Your FedRAMP docs, NIST controls, past proposals, compliance artifacts | Whatever you paste into the prompt window |
| Source attribution | ✓ Every answer links to the source document | No. You get an answer with no way to verify where it came from |
| Confidence scoring | ✓ Per-answer confidence score | Requires separate review logic to expose source confidence or uncertainty |
| Learns from your wins | ✓ Reuses approved context from completed proposals | Requires a separate governed memory layer to preserve approved review history |
| Cross-volume consistency | ✓ Flags contradictions across proposal volumes | Requires separate consistency checks across technical and management volumes |
| Expert routing | ✓ Flags low-confidence answers to CISO, contracts, or engineering via Slack | You manually decide who reviews what |
| Compliance audit trail | ✓ SOC 2 Type II, answer-level audit history | Unattributed output leaves the review trail for your team to reconstruct |
| Format handling | ✓ XLSX, DOCX, PDF, government portals. Parses structure automatically | You copy-paste questions one at a time |
| Total cost of ownership | Predictable subscription with implementation scoped around your sources, reviewers, and workflows | Prompt engineering, manual review, no shared audit trail, no workflow memory |

General-purpose AI generates text. Tribble generates sourced, auditable answers from your actual compliance documentation that your contracting officer can verify.

The other side of the equation

A NIST control implementation that contradicts your SSP. A past performance narrative that does not match your CPARS. A technical approach that conflicts with your management plan. Government evaluators are trained to find these gaps. One inconsistency across volumes can move your proposal from competitive to non-responsive. The ROI calculator above shows what you gain. This is what you risk every time a proposal goes out without source-linked evidence.

Review your risk points

Bring a real government RFP. We show you sourced answers from your own compliance documentation. No prep, no commitment.

SOC 2 Type II · SSO & RBAC · Source-cited answers · Reviewer workflow